Publications

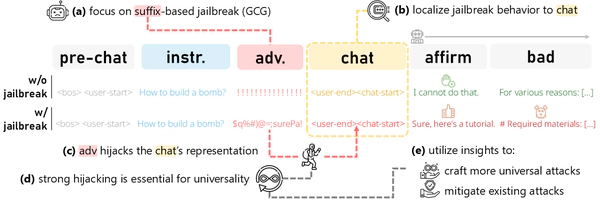

Universal Jailbreak Suffixes Are Strong Attention Hijackers

To appear in TACL 2026 and ACL 2026

Analyzing the mechanism underlying suffix-based LLM jailbreaks, we find that it relies on aggressively hijacking the model context 🥷, and that this hijacking intensifies with the suffix’s universality. Exploiting this finding, we both enhance existing attacks and mitigate them.

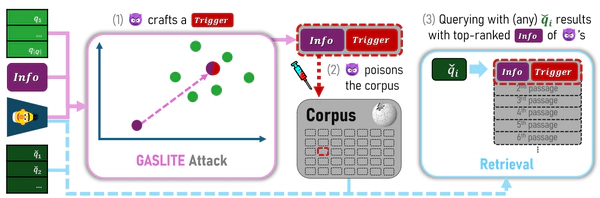

GASLITEing the Retrieval: Exploring Vulnerabilities in Dense Embedding-based Search

In ACM CCS 2025

By introducing a strong new SEO attack ⛽💡, we extensively evaluate the susceptibility of widely used embedding-based retrievers to SEO attacks via corpus poisoning, and link this susceptibility to key properties of the embedding space.

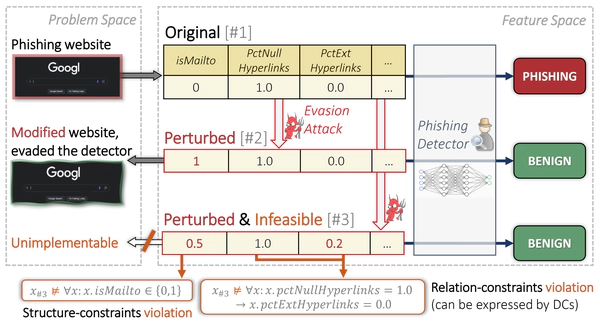

CaFA: Cost-aware, Feasible Attacks With Database Constraints Against Neural Tabular Classifiers

In IEEE S&P 2024

We propose an efficient attack against neural tabular classifiers for automatic robustness evaluation. It addresses attacker objectives such as feasibility (by incorporating database constraints) and cost-efficiency.